What Changes in Customer Communication When AI Handles First Response

Over the past five years, customer communication has quietly undergone a structural shift.

In 2018-2019, AI in customer service largely meant scripted chatbots deflecting basic FAQs. In 2023-2025, that changed. Large language models and agentic orchestration systems moved AI from “deflection tool” to first-line responder.

Today, in many enterprise environments, AI is no longer assisting the support team. It is the first entity customers speak to.

That single architectural shift — AI handling first response — alters three fundamental variables in customer communication:

- Latency

- Response quality

- Escalation dynamics

And together, those variables directly influence revenue and customer lifetime value.

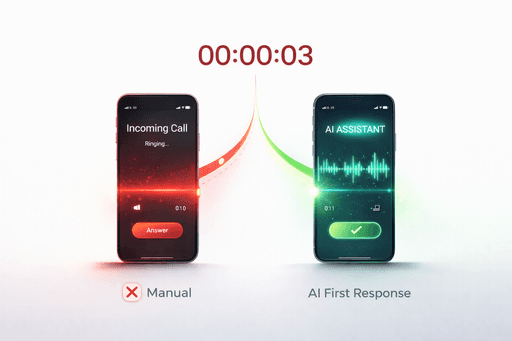

Latency Collapses — and That Changes Behavior

Customer response time has always mattered. What changed in the AI era is the threshold of tolerance.

Before widespread AI deployment, customers expected to wait minutes or hours. In live chat, median first-response time in enterprise environments often ranged from 2 to 8 minutes during peak load. Email queues could stretch to 6-24 hours.

When AI handles first response, latency drops to seconds.

This is not a marginal optimization. It is a behavioral transformation.

In 2022, Shopify reported that merchants using AI-driven chat assistants during peak sales periods reduced median response times from multiple minutes to under 10 seconds. The measurable effect was not just satisfaction — it was conversion. Fast response during checkout hesitation reduced abandonment rates in high-intent sessions.

Similarly, H&M’s 2021-2022 AI assistant rollout across digital channels cut initial response time during busy periods to roughly a third of what human-only chat delivered. Internal reporting indicated that conversion rates increased during chatbot-led interactions.

Latency influences perception of reliability. In behavioral economics terms, instant response reduces cognitive drift — the moment when a customer abandons intent and shifts attention elsewhere.

AI-first communication removes that drift window.

For e-commerce and service businesses, that is not a support metric. It is a revenue lever.

Response Quality Becomes Structured, Not Individual

The second change is subtler.

Human-first response quality varies by agent, shift, fatigue level, and training consistency. Even well-trained teams produce variability.

When AI handles first response, variability decreases.

Morgan Stanley’s internal GPT-4-powered assistant (rolled out broadly in 2023 after pilots in 2022) demonstrated something instructive. By indexing over 100,000 internal research documents into a structured retrieval system, the firm enabled advisors to access consistent, context-aware answers instantly. Adoption rates reached above 90% among advisors because the system reduced search time and increased informational confidence.

In customer communication contexts, similar retrieval-augmented architectures ensure that:

- Policies are answered consistently

- Pricing explanations are standardized

- Returns and logistics details are aligned with current data

This does not mean AI is “smarter” than humans. It means the knowledge layer is centralized and structured.
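As a rough illustration of what a centralized knowledge layer means in practice, the sketch below answers differently phrased questions from one shared store, so the wording of the answer does not depend on who (or what) is responding. The policy snippets, the `KNOWLEDGE_BASE` name, and the word-overlap scoring are all assumptions made for this example; production retrieval-augmented systems typically use embedding search over live documents rather than keyword matching.

```python
import re
from typing import Optional

# Hypothetical policy snippets, invented for illustration only.
KNOWLEDGE_BASE = {
    "returns": "Items can be returned within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "pricing": "Prices shown at checkout include applicable taxes.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str) -> Optional[str]:
    """Return the entry sharing the most words with the question, or None."""
    question_words = tokenize(question)
    best_topic, best_score = None, 0
    for topic, text in KNOWLEDGE_BASE.items():
        # Count overlap against the entry text plus its topic label.
        score = len(question_words & (tokenize(text) | {topic}))
        if score > best_score:
            best_topic, best_score = topic, score
    return KNOWLEDGE_BASE[best_topic] if best_topic else None
```

Two phrasings such as `retrieve("What is your returns policy?")` and `retrieve("Can I send returns back?")` resolve to the same stored sentence, which is the consistency property described above.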

Quality shifts from personality-driven to system-driven.

For enterprises, this reduces compliance risk and messaging drift. In regulated industries — banking, insurance, healthcare — this consistency is critical.

Escalation Becomes Architectural, Not Reactive

Perhaps the most underestimated shift occurs in escalation dynamics.

In traditional support models, escalation depends on agent judgment. The frontline agent decides whether to transfer a case.

When AI handles first response, escalation is governed by logic.

Modern AI-first systems (2023 onward) increasingly use confidence scoring, intent detection, and workflow branching to determine when a human should intervene. Escalation is no longer emotional or subjective — it is threshold-based.

American Express’s AI support systems, expanded significantly during 2020-2023, reduced live-agent load by automating high-frequency queries. However, critical or high-value interactions were routed immediately to human agents. This hybrid model preserved service quality while reducing cost.

The key operational shift: escalation becomes a design decision.

COOs now decide:

- At what confidence score does AI escalate?

- Which transaction types bypass AI entirely?

- Which customer segments receive human-first routing?

This turns customer communication into a controlled flow rather than a queue.
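The three design questions above can be expressed as explicit routing rules. The following is a minimal sketch, assuming an upstream model supplies a confidence score between 0 and 1; the threshold value, transaction types, and segment names are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass

# All values below are hypothetical and chosen only for illustration.
CONFIDENCE_THRESHOLD = 0.75  # below this score, the AI hands off
BYPASS_TRANSACTION_TYPES = {"fraud_dispute", "account_closure"}
HUMAN_FIRST_SEGMENTS = {"enterprise", "vip"}


@dataclass
class Inquiry:
    transaction_type: str
    customer_segment: str
    ai_confidence: float  # assumed score in [0, 1] from an upstream model


def route(inquiry: Inquiry) -> str:
    """Return "human" or "ai" using fixed, auditable rules."""
    # Some transaction types never go to the AI at all.
    if inquiry.transaction_type in BYPASS_TRANSACTION_TYPES:
        return "human"
    # Some customer segments always get a human first.
    if inquiry.customer_segment in HUMAN_FIRST_SEGMENTS:
        return "human"
    # Otherwise the AI answers only when it is confident enough.
    if inquiry.ai_confidence < CONFIDENCE_THRESHOLD:
        return "human"
    return "ai"
```

Because each rule is a named constant rather than an agent's judgment call, changing escalation behavior becomes a reviewable configuration change, for example raising `CONFIDENCE_THRESHOLD` for a regulated product line.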

Conversion Is the Hidden KPI

Many organizations evaluate AI-first response primarily through cost reduction: call deflection, lower headcount growth, shorter handling time.

That is incomplete.

The more significant impact often appears in conversion metrics.

Consider three mechanisms:

1. Checkout Hesitation Handling

AI can intervene in-session, answer sizing or delivery questions instantly, and prevent abandonment. Retail benchmarks show abandoned cart recovery systems can recover 15-30% of otherwise lost transactions when deployed correctly.

2. Lead Qualification Speed

In B2B contexts, AI-first response to inbound inquiries reduces lead cooling. Studies across SaaS sectors show that responding within 5 minutes increases qualification likelihood dramatically compared to longer delays. AI collapses that response gap to near-zero.

3. Post-Purchase Confidence

Immediate answers to shipping, returns, or product usage questions reduce refund requests and improve retention.

Latency reduction + structured quality + controlled escalation = measurable revenue stabilization.

The Operational Side Effect: Workforce Redesign

Between 2020 and 2024, enterprises learned that AI-first communication does not eliminate human roles — it redistributes them.

Entry-level repetitive inquiries decline. Complex, emotionally nuanced, or high-value interactions remain human-driven.

The support workforce shifts upward in complexity.

This creates two second-order effects:

- Training requirements increase — agents handle fewer but harder cases.

- AI observability becomes essential — leaders must monitor AI-human transitions.
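One simple way to start monitoring AI-human transitions is to track median first-response latency alongside the share of conversations that escalate. The sketch below assumes a log of conversation records with hypothetical field names (`first_response_s`, `escalated`) and made-up sample values; real logging schemas will differ.

```python
from statistics import median

# Hypothetical conversation records; field names and values are assumptions.
conversations = [
    {"first_response_s": 3.0, "escalated": False},
    {"first_response_s": 5.0, "escalated": True},
    {"first_response_s": 4.0, "escalated": False},
    {"first_response_s": 8.0, "escalated": True},
]


def handoff_summary(records):
    """Return median first-response latency and the escalation rate."""
    if not records:
        raise ValueError("at least one conversation record is required")
    latencies = [r["first_response_s"] for r in records]
    escalated = sum(1 for r in records if r["escalated"])
    return {
        "median_first_response_s": median(latencies),
        "escalation_rate": escalated / len(records),
    }


summary = handoff_summary(conversations)
print(summary)
```

Tracked over time, a rising escalation rate at a stable latency would suggest the AI is meeting more questions it cannot answer confidently, which is a signal for knowledge-base or threshold review rather than staffing alone.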

Companies that treat AI-first communication as “automation” often miss this redesign requirement. Companies that treat it as “workflow re-architecture” manage the shift more effectively.

A Timeline of the Shift

- 2018-2019: Scripted chatbots primarily used for FAQ deflection.

- 2020-2021: Pandemic-driven acceleration of digital support; increased investment in conversational AI.

- 2022: LLM capabilities expand; enterprises begin experimenting with generative AI for support and knowledge retrieval.

- 2023-2024: AI becomes first-response layer in e-commerce and financial services; hybrid human-AI escalation models mature.

- 2025 onward: AI-first communication becomes baseline expectation in high-volume digital environments.

We are no longer in the experimentation phase. We are in the architecture phase.

What Actually Changes

When AI handles first response, customer communication changes from:

Human queue -> Variable quality -> Reactive escalation

to:

Instant system -> Structured knowledge -> Designed escalation

The transformation is not cosmetic. It alters:

- Customer perception of responsiveness

- Conversion probability under hesitation

- Cost structure of support

- Workforce composition

- Compliance control

Most importantly, it shifts communication from being a cost center to being an operational layer tightly linked to revenue.

The Strategic Question for 2026

The question is no longer whether AI should handle first response.

The real question for enterprise leaders is:

Is your first-response layer designed deliberately — or did it evolve organically?

Because once AI becomes the front door of customer interaction, it ceases to be a support tool. It becomes infrastructure. And infrastructure must be engineered with the same rigor as payment systems or logistics pipelines. Customer communication is no longer just conversation. It is conversion architecture.